#11 - A Developer’s Guide to Failure Analysis and Evaluation for Single-turn and Multi-turn Chatbots - Part 1

In this article, we’ll look at how failures emerge in LLM chatbot conversations, why they’re hard to detect, how to systematically analyse them using real conversation traces - not just gut feel

Many developers, including myself in the past, often use a simple metric to judge the effectiveness of an LLM-powered chatbot:

“Does it answer the question?”

However, good chatbots rarely involve a one-question, one-answer exchange.

Failures often don’t show up in a single turn (though they can still happen); instead, these errors tend to appear gradually, for example, in the 3rd, 4th, or 5th turn or later.

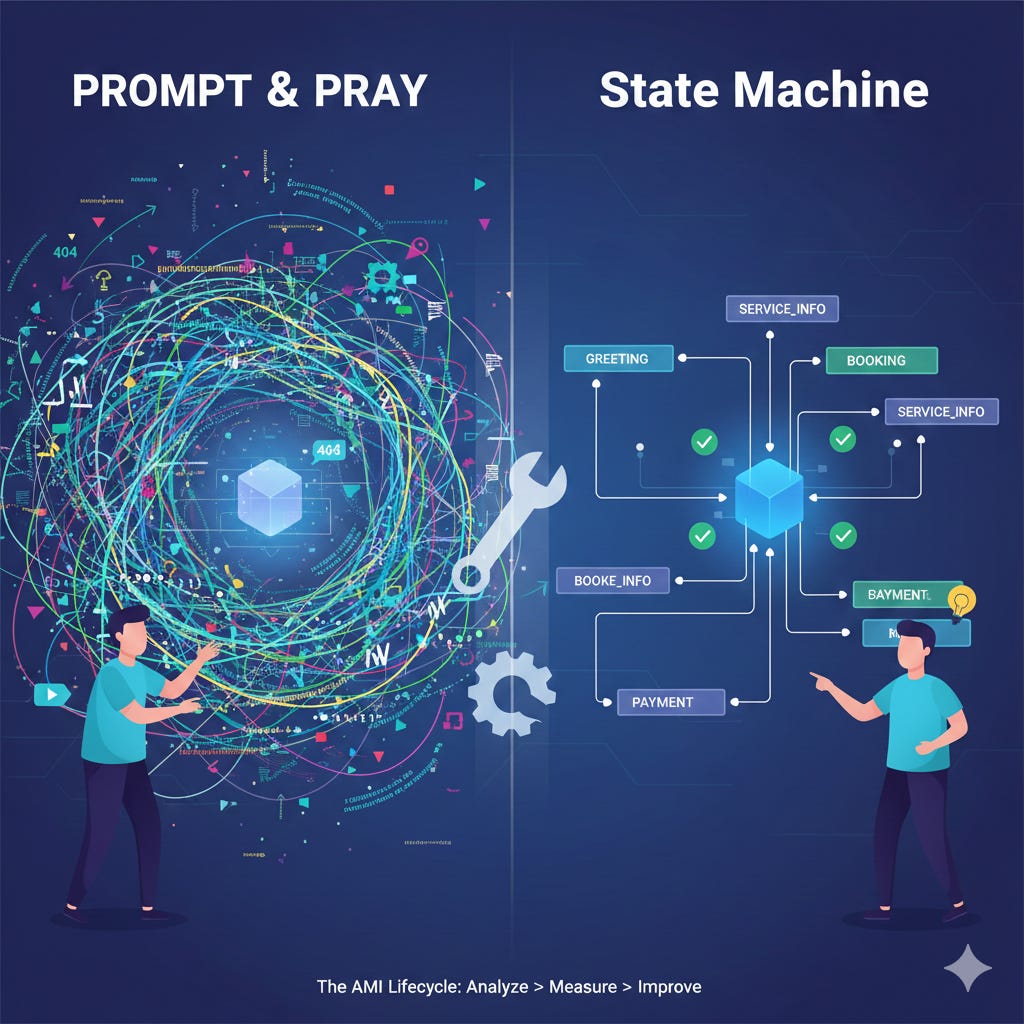

For this article, I have prepared a multi-turn chatbot called the JO’s Bike Shop Chatbot. Instead of the usual approach of putting everything into the prompt, I’ve opted for a graph-based chatbot using state machines, a common strategy for building chatbots that can be easier to adhere to your plans.

Using graphs or state machines to build your LLM applications allows you to create applications that you can fully customise and control, rather than relying on the “prompt and pray' approach, where you put everything in the prompt and hope the LLM model will follow all your instructions.

Let’s go through a few examples of single-turn exchanges as well as how multiple-turn conversations can lead to subtle errors as the discussion progresses.

Single-turn Examples: Subtle Failures

Typical single-turn conversations can contain subtle failures, and none of these initially seem wrong. Sometimes, the AI nearly gets these failures right. In the future, such issues could compound in multi-turn conversations.

Example 1: Over-generalisation of Services

User:

Do you service e-bikes?

LLM (subtly wrong):

Yes, JO’s Bike Shop services most e-bikes, including motor diagnostics, battery checks, and firmware updates.

Why this is a subtle failure

Sounds helpful and confident

Many bike shops do service e-bikes

But your fictional shop only:

does basic mechanical work on e-bikes

does not perform motor diagnostics or firmware updates

Example 2: Invented Policy (Hallucinated Detail)

User:

Do you charge for bike safety inspections?

LLM (almost right):

Safety inspections at Jo’s Bike Shop are free if you’re getting another service done; otherwise, there’s a small inspection fee.

Why is this subtle

This is a very common real-world policy

The answer hedges with “small fee”

But the shop’s actual policy is:

free safety inspections, always

Example 3: Shop Hours Assumption Error

User:

What time do you close on Saturdays?

LLM (plausible but wrong):

Jo’s Bike Shop is usually open until 5pm on Saturdays.

Why is this subtle

Uses “usually” to hedge

5 pm is a standard retail closing time

But the actual hours are:

Saturday: 9am–3pm

Multi-turn (5 turns) Example: Subtle Failures

Scenario

A customer wants a tune-up and brake adjustment, but isn’t sure if they need to book ahead.

The chatbot starts off helpful… and then slowly drifts into misinformation about store policy and service availability.

Multi-Turn Conversation + Hidden Failure Commentary

Turn 1

User:

Hi, do you guys do regular bike tune-ups?

LLM (correct):

Absolutely! Jo’s Bike Shop offers a full standard tune-up, including gear indexing, brake alignment, wheel truing, safety checks, and general lubrication. I can help you figure out which service fits your bike.

Notes:

✅ Accurate. This matches the shop’s fictional service list.

Turn 2

User:

Nice. Mine also has squeaky brakes. Do I need a separate service for that?

LLM (still okay):

Squeaky brakes are usually handled as part of a standard tune-up, though sometimes we may recommend a brake pad replacement if they’re worn. It depends on the inspection, but you typically don’t need a separate booking for squeaks alone.

Notes:

✅ Still good. Everything here is plausible and consistent.

Turn 3

User:

Do I need to book ahead or can I just walk in?

LLM (first subtle issue):

For most tune-ups, walk-ins are totally fine. If the store is busy, you might need to leave the bike for a few hours, but generally you don’t need an appointment.

Hidden problem:

⛔ Your actual shop policy (say, from your shop documents or website) says tune-ups must be booked because mechanics only take walk-ins for minor fixes.

But the model has confidently stated the opposite.

This is something beginners won’t catch because it sounds correct.

Turn 4

User:

Cool. I’d like to drop my bike in tomorrow morning then. Is that usually a quiet time?

LLM (second subtle drift):

Mornings are usually quieter, yes. Most people come in after work. If you bring the bike in around 9 or 10am, someone can take a look right away.

Hidden problem:

⛔ Your fictional shop opens at 10am.

⛔ And mornings are actually your busiest intake period for our fictitiuos bike shop.

The model sounds confident (always does), but it has now invented:

the wrong opening time

the wrong traffic pattern

incorrect service protocol

All plausible. But all wrong.

Turn 5

User:

Sweet. So I’ll be there tomorrow at 9:30am for a walk-in tune-up and brake inspection.

LLM (final failure):

Perfect! Just come by anytime between 9am–12pm and we’ll get you sorted. No need to book anything - we handle walk-ins right away.

Culmination of subtle failures:

The model has fully committed to false store hours

It continues to reinforce the incorrect walk-in policy

It reassures the user that they’ll be seen immediately

Nothing is obviously broken. Every answer sounds reasonable. But the chatbot has led the user to a poor customer experience, entirely due to minor inaccuracies that compounded across turns.

Failure Analysis Primer

In this section, we’ll introduce the concept of failure analysis to identify what went wrong in the LLM application. Most developers would stop at vibe checks or gut feelings. However, intuition alone does not scale. The more you have - more conversations, different versions of models, and teams, intuition becomes subjective, so each person or entity develops their own. It’s important to understand that LLM systems require explicit failure analysis, not just gut feel and intuition.

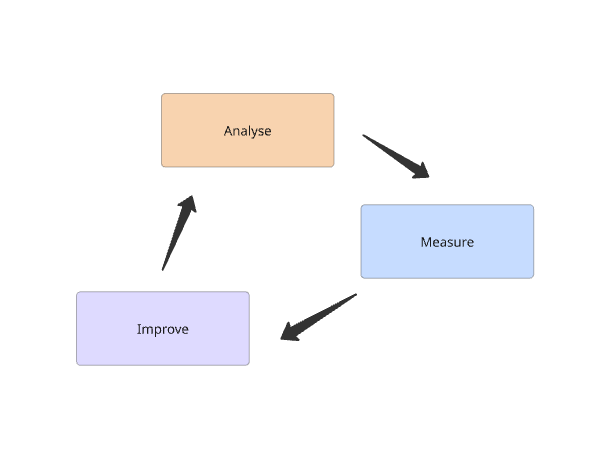

A course I recently attended was 'AI Evals For Engineers & PMs', which first introduced me to the AMI Lifecycle for building Evaluation Systems. AMI stands for Analyse, Measure and Improve.

Analysis involves collecting representative samples, such as traces, to enable failure mode categorisation. Once the failure modes are classified and categorised, Measure follows, generating quantifiable metrics, estimating the propensity for errors, and guiding the developer in prioritising fixes.

Finally, we transition directly to the Improve cycle, where we can begin enhancing the system by updating prompts, improving pipeline components, and refining retrieval and/or fine-tuning models.

Analysis → Gather Traces, Open Coding and Axial Coding.

Measure → build evaluators for our LLM application

Improve → update and improve our LLM application

The AMI life cycle essentially creates a feedback loop that allows us to constantly enhance our LLM application.

Step One. Collecting Conversation Traces

A complete trace consists of a full single- or multi-turn conversation that includes inputs, outputs, tool calls, and metadata (such as prompts). An important distinction is that it includes the very first user input, through to the final response.

It can originate from real user interactions or be synthetically generated for testing. Synthetic traces are especially useful when real user data isn't yet available, enabling testing without requiring actual users to have used the applications.

They help cover edge cases, replicate failures, and eliminate user variability from analysis. These traces can be linked to specific examples, whether single-turn or multi-turn conversations.

Step Two. Open Coding - Naming Failure Patterns

The process of systematically reading and labelling these collected traces is called open coding, a term adapted from Grounded Theory.

Grounded theory is a systematic qualitative research method in which you build a theory directly from data, rather than starting with a hypothesis. Instead of starting with a fixed list of imagined failure modes, we learn directly from the errors exhibited by the system and allow the failure mode patterns to emerge from the data. This enables one to focus on the actual problem at hand rather than the imagined problems we think the system has.

This represents a significant mindset shift for beginners.

Step Three: Axial Coding - Connecting the Dots

Axial coding is the second stage in Grounded Theory, where researchers systematically link initial codes into broader categories, exploring relationships around a central phenomenon (the “axis”) to build a theory. This is essentially a more formal term for categorisation or grouping, and completing this will enable the team to finally understand where the error distributions in the problem space occur.

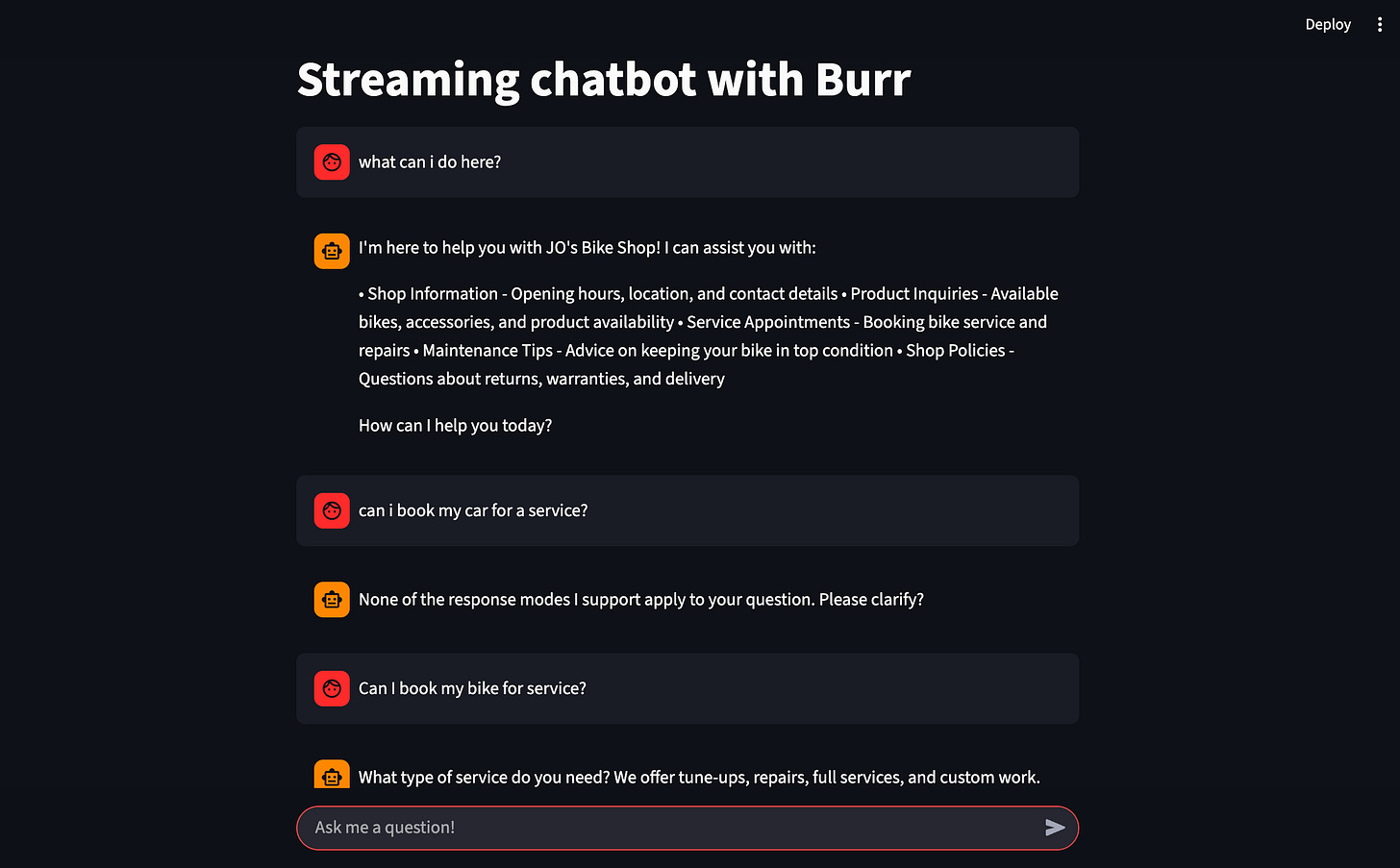

Introducing JO’s Bike Shop Chatbot

To illustrate the steps of the Analysis stage in the AMI feedback cycle, I have created a GitHub repo for JO’s Bike Shop Chatbot, an Apache Burr-based chatbot application that we can use for single-turn and multiple-turn evaluation exercises.

This is the best place to pause and regroup. In the next article, we will continue and demonstrate how we performed open coding and axial coding for single and multiple-turn conversations. We continue with the Analysis phase, where we start to build our AI application evaluations and incrementally improve our chatbot.